Cut your team's AI bill. Deploy in 15 minutes.

One GPU machine becomes your private AI: SSO, audit, OpenAI-compatible API.

From GPU machine to AI service in 3 steps

The CTO keeps control, employees get a simple URL, and developers keep their tools.

Book an AI cost audit

When cloud AI becomes a budget line, your GPU becomes an asset.

Your models run on your hardware

Inference is routed to your GPU machine through an outbound tunnel. You keep control over retention and access.

SSO, SCIM, and governance

Start with magic-link login, add SAML/OIDC on the Startup plan, then SCIM and audit exports on Enterprise.

Make dev tools pay back faster

Keep Claude Code, Cursor, or OpenCode in the workflow with a local API that checks model capabilities before routing.
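As a rough illustration of what "OpenAI-compatible" means for a client, the sketch below builds a standard chat-completions request with Python's standard library. The base URL, API key, and model name are placeholders, not real OwnLLM values; the `/v1/chat/completions` path and Bearer header follow the usual OpenAI convention and are assumed to apply here.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat-completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values: swap in your team URL, key, and a model you expose.
req = build_chat_request(
    "https://ai.example.internal", "sk-placeholder", "example-model", "Hello"
)
```

Passing `req` to `urllib.request.urlopen` would send it. In most dev tools, the equivalent change is usually just the base URL and the API key.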

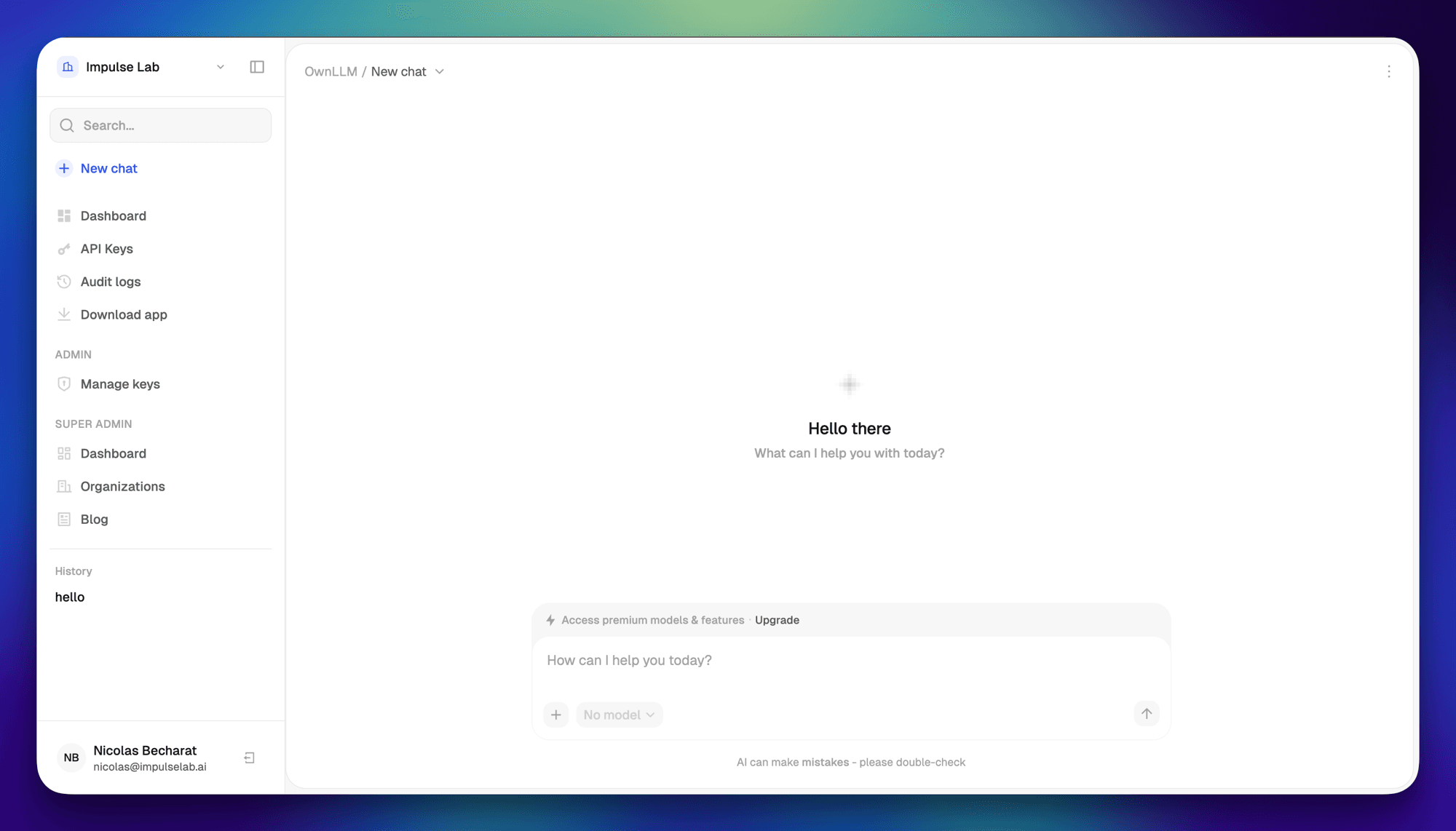

Web chat for non-technical teams

A team URL, company login, and models selected for your actual hardware.

Flat costs, zero seat sprawl

One subscription replaces stacked per-seat AI licenses. Track who uses what and keep your AI budget predictable.

Start small, expose the right model for the job

OwnLLM sells the operational layer: you choose the machine, we deliver access, updates, security, model recommendations, and clear capability labels.

Tool calling is only enabled for models whose Ollama capabilities include tools. Smaller chat models stay available for simple prompts without breaking agentic clients.
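A capability check of this kind could look roughly like the sketch below. It is not OwnLLM's implementation: the `ModelInfo` type, the `check_request` function, and the model names are hypothetical, and it assumes the gateway already holds each model's capability list as plain strings such as `"tools"`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelInfo:
    name: str
    capabilities: frozenset  # assumed shape, e.g. {"completion", "tools"}


class CapabilityError(ValueError):
    """Raised when a request needs a capability the model lacks."""


def check_request(model, request):
    """Reject tool-calling requests for models without the 'tools' capability."""
    if request.get("tools") and "tools" not in model.capabilities:
        raise CapabilityError(
            f"model {model.name!r} does not support tool calling"
        )
    return request


# Hypothetical models: one chat-only, one that also supports tools.
small = ModelInfo("small-chat", frozenset({"completion"}))
agent = ModelInfo("agent-model", frozenset({"completion", "tools"}))
```

With this shape, a plain prompt still reaches `small-chat`, while an agentic client that sends a `tools` array gets a clear error up front instead of a malformed response.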

Offer local AI to your teams without forcing DIY on them.

OwnLLM keeps the control plane simple and auditable, while inference and models stay within your machine boundary.

Clear positioning for the DPO

Metadata needed for audit and billing is centralized. Conversation storage policies are explicit and configurable per tenant.

- Outbound tunnel only: no inbound ports opened on the customer network.

- SSO, admin/member roles, SCIM, and centralized revocation depending on plan.

- Hashed API keys, per-model scopes, configurable budgets, and expiration.

- Audit logs separated from content: who, when, model, tokens, and channel.

- Control plane hosted in Europe with DPA and configurable retention.

- Local inference on the customer's machine, authenticated with a short-lived shared secret.
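To make the key and audit bullets above concrete, here is a minimal sketch of how hashed API keys with per-model scopes, a token budget, and an expiry might be checked, and what a content-free audit entry could contain. All names (`ApiKeyRecord`, `issue_key`, `authorize`, `audit_entry`) are hypothetical, and SHA-256 over a random key is an assumption rather than a description of OwnLLM's actual scheme.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ApiKeyRecord:
    key_hash: str              # only the hash is stored, never the raw key
    allowed_models: frozenset  # per-model scope
    token_budget: int
    expires_at: datetime
    tokens_used: int = 0


def hash_key(raw_key):
    return hashlib.sha256(raw_key.encode("utf-8")).hexdigest()


def issue_key(allowed_models, token_budget, ttl):
    """Return the raw key (shown once) and the record to persist."""
    raw = "sk-" + secrets.token_urlsafe(32)
    expires = datetime.now(timezone.utc) + ttl
    record = ApiKeyRecord(hash_key(raw), frozenset(allowed_models), token_budget, expires)
    return raw, record


def authorize(record, presented_key, model, tokens, now=None):
    """Check hash, expiry, scope, and budget; count tokens on success."""
    now = now or datetime.now(timezone.utc)
    if not hmac.compare_digest(record.key_hash, hash_key(presented_key)):
        return False
    if now >= record.expires_at:
        return False
    if model not in record.allowed_models:
        return False
    if record.tokens_used + tokens > record.token_budget:
        return False
    record.tokens_used += tokens
    return True


def audit_entry(who, model, tokens, channel, when=None):
    """Metadata only: no prompt or response text is recorded."""
    when = when or datetime.now(timezone.utc)
    return {"who": who, "when": when.isoformat(), "model": model,
            "tokens": tokens, "channel": channel}
```

Keeping the audit entry to these five fields means audit exports can be shared without exposing conversation content.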

Flat AI infrastructure pricing that pays back in weeks, not quarters.

Stop adding ChatGPT, Copilot, Cursor, and Claude seats per employee. One subscription, one machine, your whole team.

Solo

For individual devs who want a private OpenAI-compatible endpoint on their own machine.

Replace your OpenAI API key with your own hardware, for up to 3 users

- Up to 3 users (Live)

- 1 paired machine (Live)

- 1 active model (Live)

- Magic link auth (Live)

- OpenAI-compatible API (Live)

Team

Private AI for small teams starting with one machine.

Replaces ~10 ChatGPT Business seats

- Up to 10 users (Live)

- 1 paired machine (Live)

- 3 active models (Live)

- Magic link auth (Live)

- OpenAI-compatible API (Live)

- Web chat (Beta)

Startup

The target plan for SMBs replacing stacked AI seats.

Replaces ~50 ChatGPT Business seats or stacked ChatGPT + Copilot subscriptions

- Up to 50 users (Live)

- 8 active models (Live)

- SSO SAML / OIDC (Beta)

- 90-day audit logs (Soon)

- API budgets and scopes (Live)

- Capability-aware model routing (Live)

Enterprise

For organizations that need compliance and priority support.

Replaces ChatGPT + Copilot + Cursor Teams stacks at 80–200 employees

- Users on quote (Ask us)

- 20+ active models (Live)

- SCIM 2.0 (Soon)

- 12-month audit export (Soon)

- Custom domain (Ask us)

- 4h support and compliance services (Ask us)